New work on how to construct neural network layers on composite objects of scalars, vectors, bivectors, … → multivectors! Via Clifford algebras, we generalize convolutions and Fourier transforms to multivectors, which is especially relevant for PDE modeling:

Work done in close collaboration with @vdbergrianne and @wellingmax at our new AI4Science team at Microsoft Research (@MSFTResearch) Amsterdam, and with @rejuvyesh at the Microsoft Autonomous Systems and Robotics Research Group.

2/n

In the sciences, PDEs describe physical processes as scalar and vector fields that interact and coevolve over time. We want neural PDE surrogates to take into account the relationships between different fields and their internal components, via so-called multivectors.

3/n

In Clifford algebras, different spatial primitives can be summarized into such multivectors.

A multivector has not only scalar and vector components, but also plane (bivector) and volume (trivector) primitives.

4/n
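
As a rough illustration of the component count, the sketch below tallies how many independent components each grade contributes in an n-dimensional Clifford algebra. The helper name `grade_dimensions` is hypothetical and not taken from the work itself; it only uses the standard fact that grade k has "n choose k" components.

```python
from math import comb


def grade_dimensions(n: int) -> list[int]:
    """Components per grade (scalar, vector, bivector, ...) for an n-dimensional space."""
    return [comb(n, k) for k in range(n + 1)]


# 3D: 1 scalar, 3 vector, 3 bivector and 1 trivector component
print(grade_dimensions(3))       # [1, 3, 3, 1]
print(sum(grade_dimensions(3)))  # 8
```

The total is 2^n, so a full multivector has 4 components in 2D and 8 in 3D.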

An example of a bivector is the cross-product of two vectors in 3D. It describes the plane segment spanned by these two vectors and is often represented as a vector itself. But under reflection the cross-product picks up a sign flip that a true vector does not. It is a bivector.

5/n
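
The sign flip can be checked numerically. This is a minimal NumPy sketch with arbitrarily chosen vectors and a mirror reflection through the plane x = 0; none of the values come from the work itself.

```python
import numpy as np

a = np.array([1.0, 2.0, 3.0])
b = np.array([-2.0, 0.5, 1.0])

# Mirror reflection through the plane x = 0 (orthogonal matrix with determinant -1)
R = np.diag([-1.0, 1.0, 1.0])

# A true vector would simply be mapped by R
cross_then_reflect = R @ np.cross(a, b)

# The cross-product of the reflected inputs comes out with the opposite sign
reflect_then_cross = np.cross(R @ a, R @ b)

sign_flipped = np.allclose(reflect_then_cross, -cross_then_reflect)
print(sign_flipped)  # True
```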

We introduce Clifford neural layers, both for convolution and for Fourier operations, that handle scalar (e.g. density), vector (e.g. wind/electric field), bivector (e.g. magnetic field) and higher-order fields as proper geometric objects termed multivector fields.

6/n

And that is the cool thing: we can build neural networks on top of this concept of multivectors 😊 If there are physical connections between fields, e.g. between electric and magnetic fields, Clifford layers give you a handle on how to encode these.

7/n

We introduce Clifford convolution: instead of convolving over channels of feature maps, we convolve over channels of multivector feature maps using multivector kernels.

8/n
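
To make the idea concrete, here is a minimal NumPy sketch of a single-channel Clifford convolution in 2D. It is not the implementation from the work. The assumptions are: the algebra Cl(2,0) with components ordered (1, e1, e2, e12), a valid cross-correlation with a square kernel, and the kernel placed on the left of the geometric product. The names `geometric_product` and `clifford_conv2d` are hypothetical.

```python
import numpy as np


def geometric_product(a, b):
    """Geometric product in Cl(2,0); the last axis holds the (1, e1, e2, e12) components."""
    a0, a1, a2, a3 = np.moveaxis(a, -1, 0)
    b0, b1, b2, b3 = np.moveaxis(b, -1, 0)
    return np.stack([
        a0 * b0 + a1 * b1 + a2 * b2 - a3 * b3,  # scalar part
        a0 * b1 + a1 * b0 - a2 * b3 + a3 * b2,  # e1 part
        a0 * b2 + a2 * b0 + a1 * b3 - a3 * b1,  # e2 part
        a0 * b3 + a3 * b0 + a1 * b2 - a2 * b1,  # e12 part
    ], axis=-1)


def clifford_conv2d(field, kernel):
    """Valid cross-correlation of a multivector field (H, W, 4) with a multivector kernel (k, k, 4)."""
    H, W, _ = field.shape
    k = kernel.shape[0]
    out = np.zeros((H - k + 1, W - k + 1, 4))
    for m in range(k):
        for n in range(k):
            # Shifted view of the field aligned with kernel entry (m, n)
            patch = field[m:m + H - k + 1, n:n + W - k + 1]
            # Multiply with the geometric product instead of a scalar product
            out += geometric_product(kernel[m, n], patch)
    return out


rng = np.random.default_rng(0)
field = rng.normal(size=(8, 8, 4))
kernel = rng.normal(size=(3, 3, 4))
print(clifford_conv2d(field, kernel).shape)  # (6, 6, 4)
```

The only change from an ordinary convolution is that each kernel entry and each feature value is a 4-component multivector, so the product mixes scalar, vector and bivector parts.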

We introduce Clifford Fourier transforms: instead of Fourier transforming feature maps, we Fourier transform multivector feature maps.

9/n
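
One way to sketch a 2D Clifford Fourier transform is shown below. It rests on the assumption that a Cl(2,0) multivector splits into two complex-like parts (scalar with bivector, and the two vector components), each of which can be passed through an ordinary FFT, with e12 playing the role of the imaginary unit because e12² = -1. The function names are hypothetical and this is not the code from the work.

```python
import numpy as np


def clifford_fft2(field):
    """field: (H, W, 4) with components (1, e1, e2, e12). Returns two complex spectra."""
    spinor = field[..., 0] + 1j * field[..., 3]  # f0 + f12 * e12
    vector = field[..., 1] + 1j * field[..., 2]  # e1 * (f1 + f2 * e12)
    return np.fft.fft2(spinor), np.fft.fft2(vector)


def clifford_ifft2(spinor_hat, vector_hat):
    """Inverse of clifford_fft2; reassembles the (H, W, 4) multivector field."""
    spinor = np.fft.ifft2(spinor_hat)
    vector = np.fft.ifft2(vector_hat)
    return np.stack([spinor.real, vector.real, vector.imag, spinor.imag], axis=-1)


rng = np.random.default_rng(0)
field = rng.normal(size=(16, 16, 4))
recovered = clifford_ifft2(*clifford_fft2(field))
print(np.allclose(recovered, field))  # True
```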

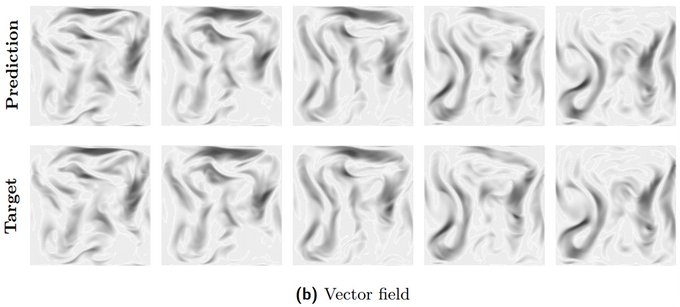

We therefore evaluate on fluid dynamics (Navier-Stokes equations), where scalar smoke and vector velocity fields form multivectors, coupled through force terms.

10/n

We further evaluate on weather forecasting models where scalar pressure and vector wind velocity fields form multivectors.

11/n

We replace each convolution with a Clifford convolution in ResNet architectures, and each Fourier layer with a Clifford Fourier layer in Fourier Neural Operators (FNOs):

12/n

We finally evaluate on Maxwell’s equations in 3 dimensions, which beautifully illustrate the multivector nature of electromagnetism. This is because the magnetic field is the dual (bivector) of the electric field (vector).

13/n
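
For reference, one common convention in the geometric-algebra literature (not necessarily the exact parametrization used in this work) packs both fields into a single electromagnetic multivector, using the pseudoscalar $I$ of the 3D algebra to turn the magnetic field into a bivector:

$$
F = \mathbf{E} + I c\,\mathbf{B}, \qquad I = e_1 e_2 e_3, \qquad I e_1 = e_2 e_3,\quad I e_2 = e_3 e_1,\quad I e_3 = e_1 e_2 .
$$

Here $\mathbf{E} = E_x e_1 + E_y e_2 + E_z e_3$ is the vector part, and $I c\,\mathbf{B} = c\,(B_x\, e_2 e_3 + B_y\, e_3 e_1 + B_z\, e_1 e_2)$ is the bivector part.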

For all these datasets, we experiment with different numbers of training trajectories. Clifford neural layers consistently improve the generalization capabilities of the tested architectures.

But… in the end, it seems we have just touched the tip of the iceberg when it comes to marrying geometric algebra and deep learning. There is much more to harness :)

@jo_brandstetter How does this compare with @mpspells's work on Clifford algebra with attention for geometric deep learning?

@andrewwhite01 @mpspells Thank you very much for forwarding this paper. We did our best to cite as much as possible, but it seems we overlooked this important one. This is a nice piece of work!

@mpspells builds multivectors by applying vector-vector operations, e.g. uses the cross-product to obtain

1/2

@jo_brandstetter I look forward to Clifford algebras being a hot topic of research in ML. I've been looking into them for the past year, but through a basic data modeling lens.

A big limitation is the exponential growth of dimension in degree. Do you restrict yourself up to 3-vectors?

@alkalait We need fewer channels if we use multivector objects. In 2D and 3D experiments, Clifford networks performed better for the same nr of params. For 4D and higher, you are right, there might be a tradeoff which needs to be explored :)
