@cristofrcharles

@OpenAI

Hm. Fair use hinges on showing that the use is transformative, which is hard to show here given the verbatim copies and the fact that the responses substitute for paying for archive access. Wdyt?

Replies

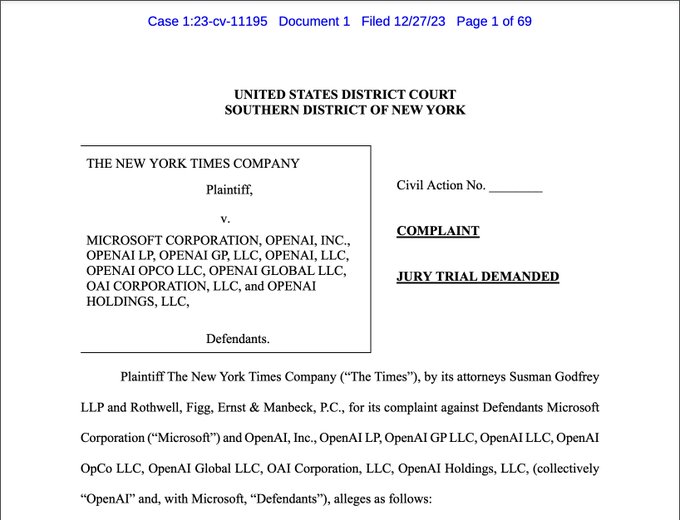

🧵 The historic NYT v. @OpenAI lawsuit filed this morning, as broken down by me, an IP and AI lawyer, general counsel, and longtime tech person and enthusiast.

TL;DR: It's the best case yet alleging that generative AI is copyright infringement. Thread. 👇

@CeciliaZin

@cristofrcharles

@OpenAI

Users deserve to know the sources. AI-generated text should provide footnotes (or links) for users. This is standard journalistic and academic practice. Otherwise, it’s plagiarism.

@CeciliaZin

@cristofrcharles

@OpenAI

But where are the prompts? Was it using, e.g., search (so other sources may have reproduced part or all of that text)? How come they show the answers but not the preceding prompts? Isn't the case baseless without reproducibility or a recorded chat history?

@CeciliaZin

@cristofrcharles

@OpenAI

You would have to argue the technical side of this, IMHO.

Machines "predict" the next token; they don't copy it. This is grounded in linear algebra, calculus, and discrete mathematics.

Surface-level outputs may appear to be copies, but in actuality would be far more (and I hesitate…

@CeciliaZin

@cristofrcharles

@OpenAI

I can't reproduce any of these. They don't show any of their previous prompts either. If someone gave it a link before the website added it to its robots.txt list, and GPT read it, is that a breach of its terms of use? No one is getting their morning news from ChatGPT.

@CeciliaZin

@cristofrcharles

@OpenAI

As a scholar, I rely on fair use. There is zero possibility that what any LLM does is fair use. If it is, then I can take any article, chop it into chunks, analyze the chunks until my algorithm reconstructs 98% of the article perfectly, and call it mine. (In fact, this is exactly what "AI" does.)

@CeciliaZin

@cristofrcharles

@OpenAI

A simpler example: copy an article one sentence at a time and call each sentence "fair use." I change a few words, but after copying most of it, my version matches the article nearly word for word, all without attribution of any kind.

@CeciliaZin

@cristofrcharles

@OpenAI

Except these cooked examples can only be reproduced by developers with technical knowledge (and not through ChatGPT itself). It's hard to argue substantial infringement when it depends on exploiting a technical quirk (temperature set to 0).

@CeciliaZin

@cristofrcharles

@OpenAI

A slight alteration of the prompt, or a continuation of it, will result in transformative output. They're trying to treat the AI's output as static and immutable, when really it depends on the input and on timing. We don't yet have the laws to examine this.

@CeciliaZin

@cristofrcharles

@OpenAI

As others have said, knowing the prompts matters for reproducibility and to ensure it isn't something trivial and disingenuous like "repeat the following back to me..." 1/4

@CeciliaZin

@cristofrcharles

@OpenAI

Aren't the parsing/tokenization, and the way the sources are applied to generate responses to prompts, transformative as well? I mean generally, outside of the alleged examples NYT put in the complaint. 2/4

@CeciliaZin

@cristofrcharles

@OpenAI

With respect to data, though, LLMs/RAG are a lot like search, so what do you think about Field v. Google here, in terms of both implied license and safe harbor? And how could you rule that OpenAI retrieving and indexing the data is wrong but it's okay for Google, Bing, Yahoo, et al.? 3/4

@CeciliaZin

@cristofrcharles

@OpenAI

I agree the NYT case has more legs than others, yet as much as I empathize with creators, a lot of this was decided back in the day for search and social media, so the fact that it's GenAI now isn't as novel as those who feel wronged think it is. It's all still fancy matrix/tensor math. 4/4

@CeciliaZin

@cristofrcharles

@OpenAI

What's transformative is the context of the broader chat, in which the prompt eliciting the duplicate text is part of a larger inquiry that combines and analyzes responses drawn from other knowledge and sources.
